Description

Model-based between-shots and real-time actuator trajectory planning will be critical to achieving high-performance scenarios and reliable, disruption-free operation in present-day tokamaks, ITER, and future fusion reactors. Key to the success of such tools is the availability of models that are both accurate enough to support useful decision making and fast enough for optimization algorithms to meet between-shots and real-time deadlines. While state-of-the-art integrated modeling codes come close to the accuracy and completeness needed for these applications, they are too computationally intensive. To address this problem, a novel accelerated simulation capability has been developed for NSTX-U by applying machine learning techniques to both empirical data and TRANSP simulations, enabling profile and equilibrium predictions on real-time-relevant time scales. The approach includes machine learning surrogates for high-fidelity TRANSP modules that accelerate calculations by orders of magnitude while maintaining high fidelity. For quantities that are not accurately modeled by TRANSP modules, machine learning is applied to an experimental database to create empirical models. By incorporating accelerated physics models, rather than training models entirely on data, the combined models are expected to perform better when projecting beyond operating points that have already been realized. By incorporating empirical, data-based models, the combined models can achieve high accuracy and continue to learn as new experimental data is obtained. Presented results provide a glimpse of the potential impact of accelerated modeling on scenario optimization and control, motivating further development of models and applications.

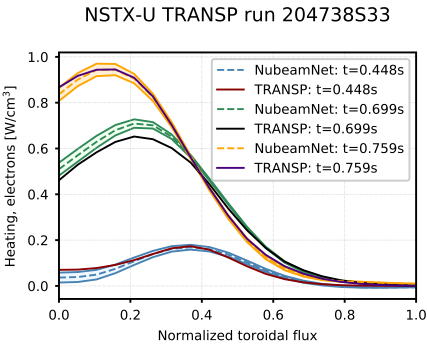

Surrogate models for TRANSP calculations: One of the most accurate, but also most time-consuming, calculations in TRANSP is NUBEAM, a Monte Carlo code that calculates the influence of neutral beam injection on plasma heating, current drive, and torque. In (1), an accelerated surrogate model for NUBEAM was developed by generating a large database of NUBEAM results for plasma conditions relevant to the NSTX-U operating space. An ensemble approach is used, in which multiple neural networks are trained on different subsets of the training data, and the model output is the average of the neural network predictions. Figure 1 compares the heating and current profiles predicted by NUBEAM with those predicted by the neural network, showing good agreement at different times during a TRANSP run that was not in the training set. Importantly, the neural network takes only ~100 microseconds to evaluate, compared to seconds or minutes for the original code. Surrogate models have also been developed for parameters used to evaluate the magnetic and momentum diffusion equations. A fast neural network has also been developed to generate plasma equilibria from coil currents and pressure and current profiles.
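The ensemble idea described above (several networks trained on different data subsets, with the mean used as the prediction) can be sketched as follows. This is a minimal illustration under stated assumptions, not the model from (1): `toy_beam_code` is a hypothetical stand-in for an expensive calculation, and each "network" is a random-feature network whose output layer is fit by least squares, swapped in here so the example needs only NumPy. The ensemble standard deviation is returned alongside the mean as a simple spread indicator.

```python
import numpy as np

rng = np.random.default_rng(0)


def toy_beam_code(x):
    """Hypothetical stand-in for an expensive calculation (this is NOT NUBEAM)."""
    return np.sin(3.0 * x[:, 0]) + 0.5 * x[:, 1] ** 2


class RandomFeatureNet:
    """One hidden tanh layer with fixed random weights; only the output layer is fit."""

    def __init__(self, n_in, n_hidden, rng):
        self.W = rng.normal(size=(n_in, n_hidden))
        self.b = rng.normal(size=n_hidden)
        self.beta = None

    def _hidden(self, x):
        return np.tanh(x @ self.W + self.b)

    def fit(self, x, y):
        # Linear least squares for the output weights.
        self.beta, *_ = np.linalg.lstsq(self._hidden(x), y, rcond=None)
        return self

    def predict(self, x):
        return self._hidden(x) @ self.beta


def train_ensemble(x, y, n_members=10, subset_frac=0.8, n_hidden=40):
    """Train each member on a different random subset of the training data."""
    members = []
    n = len(x)
    for _ in range(n_members):
        idx = rng.choice(n, size=int(subset_frac * n), replace=False)
        net = RandomFeatureNet(x.shape[1], n_hidden, rng)
        members.append(net.fit(x[idx], y[idx]))
    return members


def ensemble_predict(members, x):
    """Return the ensemble mean (the prediction) and standard deviation (the spread)."""
    preds = np.stack([m.predict(x) for m in members])
    return preds.mean(axis=0), preds.std(axis=0)


# Build a database from the "expensive" code, then train the surrogate on it.
x_train = rng.uniform(-1.0, 1.0, size=(500, 2))
y_train = toy_beam_code(x_train)
ensemble = train_ensemble(x_train, y_train)

# Evaluate on points that were not in the training set.
x_test = rng.uniform(-1.0, 1.0, size=(100, 2))
mean, std = ensemble_predict(ensemble, x_test)
rmse = float(np.sqrt(np.mean((mean - toy_beam_code(x_test)) ** 2)))
```

Evaluating the trained ensemble involves only a few small matrix products, which is the property that makes surrogates of this kind attractive for time-critical use.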

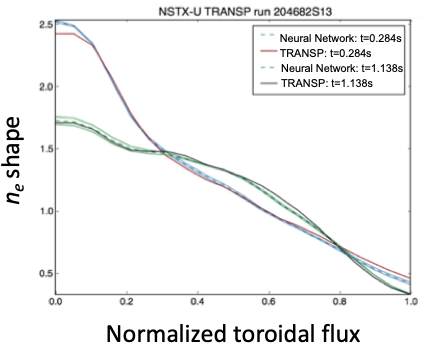

Empirically identified models: While a great deal of progress has been made in theoretical understanding and computational modeling of the turbulence that dominates transport in tokamak plasmas, there is much work to be done to enable consistent, accurate predictions of the evolution of these profiles from first principles. Accuracy aside, these models are far too computationally intensive for use in optimization and real-time applications. While recent work has shown that models for transport coefficients can be accelerated through the use of neural networks (2,3), we take an alternative data-driven approach that is fast and can reproduce experimental profile evolution well enough for shot planning and control applications. Since the shapes of the temperature and density profiles are typically observed to be 'stiff', i.e., insensitive to the detailed distribution of sources, the electron temperature and pressure profile shapes are modeled as the output of a neural network trained on the shape of the electron density and temperature profiles measured during the 2016 NSTX-U experimental campaign. The model uses plasma current, plasma boundary shaping parameters, and volume-averaged electron density and pressure as inputs, and is developed using the same techniques described in (1). As demonstrated in the example results shown in Figure 2, the model is able to accurately reproduce the shape of electron density profiles on NSTX-U. Volume-averaged stored energy and density are then predicted from energy and particle balance using empirical confinement scaling expressions.
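To illustrate the last step, a zero-dimensional energy balance of the form dW/dt = P - W/tau_E can be integrated with a power-law confinement time. The sketch below is only an assumption about the general form: the function `tau_e`, its coefficients, and the ramp shapes are hypothetical placeholders, not the scaling expressions or waveforms used in this work.

```python
import numpy as np


def tau_e(ip_ma, p_mw, c=0.05, a_ip=1.0, a_p=-0.5):
    """Hypothetical power-law confinement time [s]; coefficients are placeholders."""
    return c * ip_ma ** a_ip * p_mw ** a_p


def evolve_stored_energy(w0_mj, ip_ma, p_mw, dt):
    """Integrate dW/dt = P - W / tau_E, holding the inputs constant over each step.

    With constant inputs the step has an exact solution: W relaxes exponentially
    toward the steady state P * tau_E with time constant tau_E.
    """
    w = np.empty(len(p_mw) + 1)
    w[0] = w0_mj
    for k, (ip, p) in enumerate(zip(ip_ma, p_mw)):
        tau = tau_e(ip, p)
        w_inf = p * tau  # MW * s = MJ
        w[k + 1] = w_inf + (w[k] - w_inf) * np.exp(-dt / tau)
    return w


# Illustrative ramps (shapes assumed): current to 1 MA, heating power to 3.6 MW.
n_steps, dt = 400, 0.005
t = np.arange(n_steps) * dt
ip = np.minimum(1.0, 0.3 + 2.0 * t)
p = np.minimum(3.6, 1.0 + 6.0 * t)

w = evolve_stored_energy(0.01, ip, p, dt)
w_steady = 3.6 * tau_e(1.0, 3.6)  # flat-top steady state for comparison
```

Once both ramps reach their flat-top values, `w` relaxes to `w_steady` within a few confinement times. An analogous balance with a particle confinement time would give the volume-averaged density.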

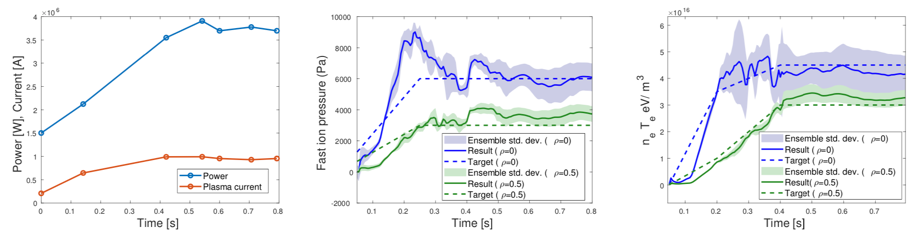

Actuator trajectory optimization: The fast execution time of the machine learning accelerated scenario evolution model is exploited to enable rapid optimization of actuator trajectories to track a target evolution of fast ion and thermal pressure. To handle the nonlinearity of the problem, genetic optimization is used to find a good candidate solution, followed by sequential quadratic programming to refine the solution. Example results of applying the optimization approach are shown in Figure 3. The plasma current and total beam power requests are ramped up to around 1 MA and 3.6 MW, respectively, and result in good tracking of the target trajectories for both fast ion pressure and electron pressure. Constraints on individual and total beam powers were considered, along with constraints on the plasma current magnitude and ramp rate. Importantly, the optimization penalizes large model ensemble standard deviations (depicted as shaded regions) to help ensure the obtained results are from the reliably modeled operating space. Future work will include further development of optimization techniques and real-time applications.
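The two-stage strategy described above can be sketched with SciPy. This is a toy under stated assumptions, not the optimizer used in this work: SciPy's `differential_evolution` (a related population-based evolutionary method) is swapped in for the genetic algorithm, `method="SLSQP"` provides the sequential quadratic programming step, and the model, limits, target, and `std_weight` are all hypothetical. The cost combines the tracking error with a penalty on the ensemble spread.

```python
import numpy as np
from scipy.optimize import LinearConstraint, differential_evolution, minimize

# All numbers below are illustrative assumptions, not NSTX-U settings.
DT, N_STEPS, N_KNOTS = 0.01, 100, 5
T = np.arange(N_STEPS) * DT
KNOT_T = np.linspace(0.0, T[-1], N_KNOTS)
P_MAX = 6.0                                # total beam power limit [MW]
MAX_STEP = 3.0                             # max power change between knots [MW]
TAUS = np.array([0.045, 0.050, 0.055])     # toy ensemble of confinement times [s]
TARGET = 0.18 * (1.0 - np.exp(-T / 0.2))   # target stored energy [MJ]


def simulate(knots):
    """Toy ensemble: each member integrates dW/dt = P - W / tau (explicit Euler)."""
    p = np.interp(T, KNOT_T, knots)
    w = np.zeros((len(TAUS), N_STEPS))
    for k in range(1, N_STEPS):
        w[:, k] = w[:, k - 1] + DT * (p[k - 1] - w[:, k - 1] / TAUS)
    return w.mean(axis=0), w.std(axis=0)


def cost(knots, std_weight=1.0):
    """Mean squared tracking error plus a penalty on the ensemble spread."""
    mean, std = simulate(knots)
    return float(np.mean((mean - TARGET) ** 2) + std_weight * np.mean(std ** 2))


bounds = [(0.0, P_MAX)] * N_KNOTS
# Rows of diff_matrix @ knots are the changes between adjacent knots.
diff_matrix = np.diff(np.eye(N_KNOTS), axis=0)
ramp_limit = LinearConstraint(diff_matrix, -MAX_STEP, MAX_STEP)

# Stage 1: global, population-based search for a good candidate.
coarse = differential_evolution(cost, bounds, constraints=(ramp_limit,),
                                seed=0, maxiter=50, polish=False)

# Stage 2: local refinement with sequential quadratic programming.
refined = minimize(cost, coarse.x, method="SLSQP", bounds=bounds,
                   constraints=[ramp_limit],
                   options={"ftol": 1e-12, "maxiter": 200})

# Keep the refined point only if it actually improved on the candidate.
best_x = refined.x if refined.success and refined.fun < coarse.fun else coarse.x
best_cost = cost(best_x)
mean_w, std_w = simulate(best_x)
```

Here `best_x` holds the beam power request at each knot, and `std_w` plays the role of the shaded uncertainty band. Raising `std_weight` pushes the solution toward trajectories on which the ensemble members agree more closely.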

Work supported by US Department of Energy Contract No. DE-AC02-09CH11466.

(1) M. D. Boyer, et al., Nuclear Fusion 2019 59 056008.

(2) O. Meneghini, et al., Nuclear Fusion 2017 57 086034.

(3) J. Citrin, et al., Nuclear Fusion 2015 55 092001.

| Affiliation | Princeton Plasma Physics Laboratory |
|---|---|
| Country or International Organization | United States |